The Challenge

The first time I saw this graphic I thought, “Yes, this nails it!” After participating in dozens of point-to-point integration projects throughout my career, I have felt the pain of this style of integration firsthand: managing and maintaining old code bases, testing and patching systems with each upgrade, combing through sketchy documentation (which I probably wrote, if I was lucky enough to have any), and troubleshooting issues when one integration point ‘falls through the cracks’ and breaks a core component of the system.

Point-to-point integrations

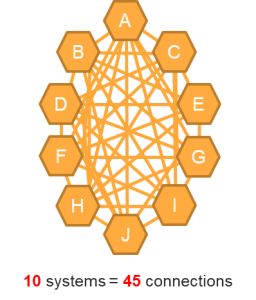

Point-to-point integrations are often one-off solutions used when you only have a few components to connect. Unfortunately, most IT organizations have learned the hard way that point-to-point integration becomes an unmanageable web of complexity, a nightmare to upgrade and almost impossible to scale when you want to add or integrate additional systems into the enterprise.

I encourage you to think about all the possible connections within your system and between your business applications. Perhaps not with the intention of integrating all these systems today, but to understand the interconnectivity of your data. What systems have duplicate data? Where is your data used? Who is updating and maintaining specific data fields? Take the municipal address as an example: this piece of data can exist across many of your core business applications, including permitting and licensing, garbage collection, 311, asset management, recreation management, tree inventory, and so on.

Imagine if this data element were a separate entity in each of your applications. Think of the data duplication, the data irregularities, the outliers, the miscoding, the misformatting, the user input errors, and so on. Trying to wrangle each of these addresses in each application would be a nightmare.

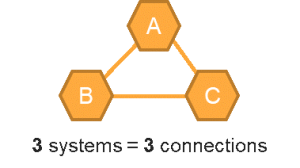

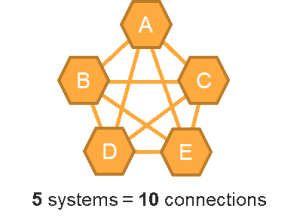

When approaching an integration project, as you add more applications and platforms, the number of required connections grows quadratically: fully connecting n systems takes n(n-1)/2 links. Connecting two or three systems seems simple enough (one and three connections, respectively), but increase that to 5 systems and you need up to 10 connections, and 10 systems can require 45. Keep in mind that each of these connections is built independently, may not be documented, needs to be maintained across version upgrades, and becomes part of your technical debt.
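The arithmetic behind that growth is easy to check with a short sketch. It assumes the worst case, where every system is linked directly to every other one, and compares that with a hub model where each system connects only once to a shared bus. The function names are illustrative only.

```python
def point_to_point_connections(n: int) -> int:
    """Links needed when each of n systems connects directly to every other one."""
    return n * (n - 1) // 2


def hub_connections(n: int) -> int:
    """Links needed when each of n systems connects only to a shared hub."""
    return n


# Compare both models for a few system counts, including the 60 to 250
# range cited for a typical local government agency.
for n in (2, 3, 5, 10, 60, 250):
    p2p = point_to_point_connections(n)
    hub = hub_connections(n)
    print(f"{n:>3} systems: {p2p:>6} point-to-point links, {hub:>3} hub links")
```

Few organizations wire every system to every other one, so real counts will be lower than the point-to-point column, but the gap between the two models still widens quickly as systems are added.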

A typical local government agency has between 60 and 250 systems that support its overall business. Many of these exchange information through file transfers or integrations.

Many point-to-point integrations are undocumented, posing real risks and difficulties when it is time to upgrade, when staff turn over, or when you want to switch out one of the connected systems. As your system grows in size and complexity, each of these connections becomes another potential point of failure for the entire system.

The Solution

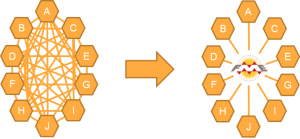

A better option does exist: using an enterprise service bus (ESB) to reduce the number of dependent connections and provide a shared communication layer between connection endpoints. As an added benefit, an ESB decreases the per-system integration costs as you move beyond the first three systems.

Enterprise Service Bus (ESB)

Many spatial data-centric organizations, such as local government agencies, already have a tool in use that enables high-level communication between applications: Safe Software’s FME Server. In addition to providing message routing and queuing, the core of an ESB model, FME allows for data translation, data validation and quality checks, and spatial decision making. This enables your organization to leverage in-house expertise, existing infrastructure, and tools, and to keep valuable intelligence in-house.
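To make the message-routing idea concrete, here is a minimal in-memory sketch of the publish/subscribe pattern that a bus is built around. It is not FME Server's API or any real ESB product: the `MessageBus` class, the `address.updated` topic, and the two inboxes are hypothetical names chosen for illustration, and a real bus would add queuing, persistence, and error handling.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List

Handler = Callable[[dict], None]


class MessageBus:
    """Hypothetical in-memory bus: systems talk to the bus, not to each other."""

    def __init__(self) -> None:
        # Maps a topic name to the handlers registered for it.
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        """Register a handler to be called for every message on a topic."""
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, message: dict) -> int:
        """Deliver a message to all subscribers; return how many received it."""
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(message)
        return len(handlers)


bus = MessageBus()

# Two downstream systems, modeled here as simple lists acting as inboxes.
permitting_inbox: List[dict] = []
asset_inbox: List[dict] = []
bus.subscribe("address.updated", permitting_inbox.append)
bus.subscribe("address.updated", asset_inbox.append)

# The system that owns address data publishes once; the bus handles fan-out.
delivered = bus.publish(
    "address.updated", {"address_id": 101, "street": "12 Main St"}
)
print(f"Delivered to {delivered} subscribers")
```

The publisher never references the permitting or asset systems directly, so adding another consumer of address updates means one new subscription rather than one new custom integration.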

Check this out

I encourage you to watch the following webinar that our Director of Solution Delivery, Neil Hellas, co-hosted with Safe Software, makers of FME Server. This webinar outlines the concept of FME Server as an ESB.

https://www.safe.com/webinars/integrating-enterprise-event-driven-messaging-using-fme-server-esb/

Want to discuss your integration challenges and brainstorm potential solutions in person? Book a free consultation with Spatial DNA: